The data from enterprise customers is clear but conflicted. While 94% of customers say they’re spending more on AI this year, they’re doing so under budget constraints that will steal from other initiatives. In addition, customers’ plans for where to run generative AI are split almost exactly down the middle between public cloud and on-premises/edge. Further complicating matters, developers report that their experiences in the public cloud with respect to feature richness and velocity of innovation have been outstanding. At the same time, organizations express valid concerns about IP leakage, compliance, legal risks and cost that will limit their use of the public cloud.

In this Breaking Analysis we’ll share the most recent data and thinking around the adoption of large language models and address the factors to consider when thinking about how the market will evolve. As always, we’ll share the latest ETR data to shed new light on key issues customers face balancing risk with time to value.

Enterprise IT Spending Remains Tight

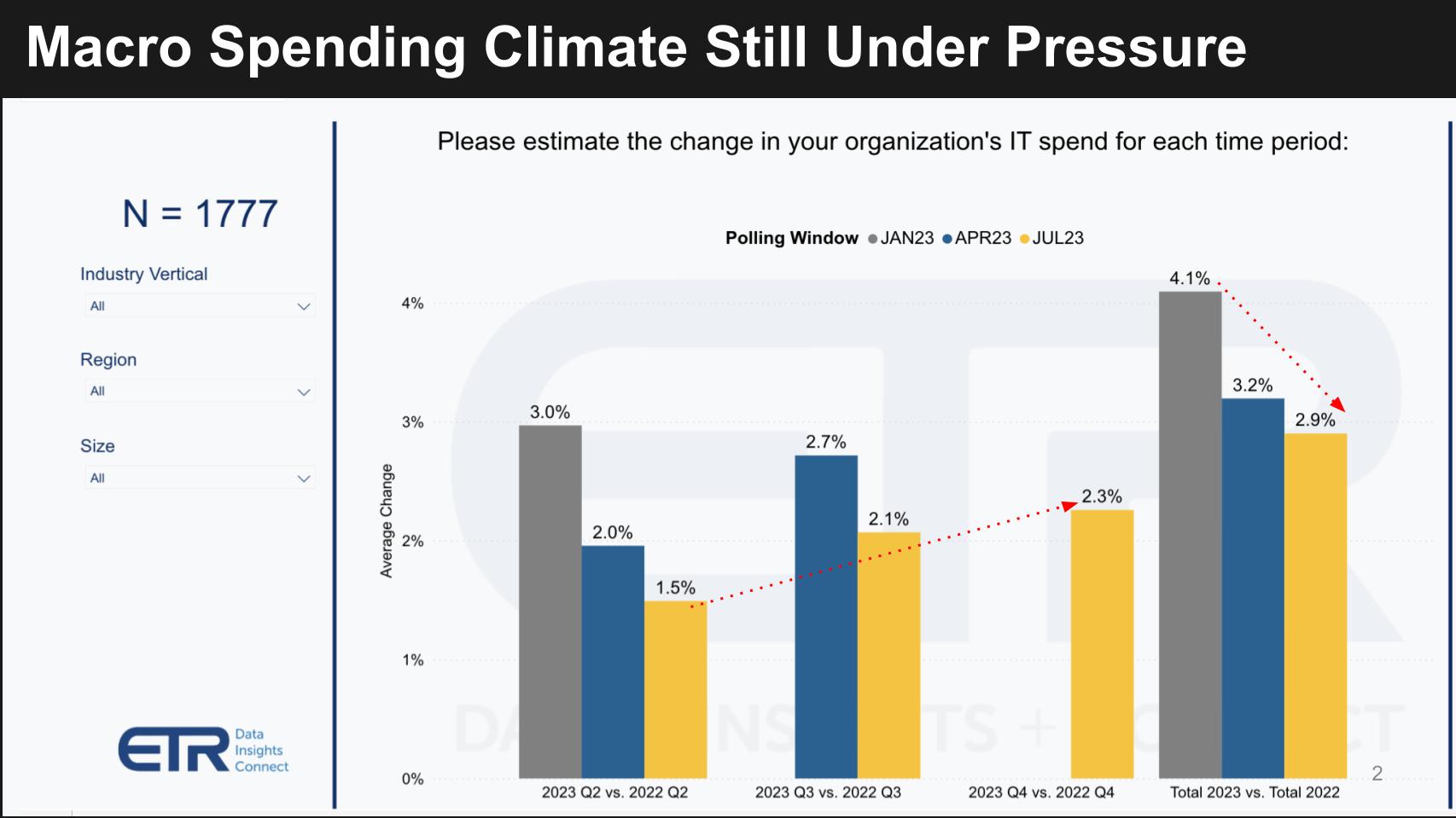

The chart below is from the latest July ETR spending snapshot. The N of 1,777 comprises senior IT decision makers representing more than $750B in spending power.

Senior IT decision makers exited 2022 with an expectation that their budgets would increase between 4% and 5%. By January that figure was down to 4.1% and, despite small sequential increases throughout the year, it currently stands at 2.9%, well below initial expectations.

Budget Constraints Force Tradeoffs

The rush to generative AI has caused organizations to reprioritize in a climate where discretionary budgets are not plentiful. As we shared in Breaking Analysis with Andy Thurai late last year, the return on AI investments has been elusive. But the ChatGPT craze forced a top-down mandate from boardrooms and, as such, has shifted the spending priorities in enterprise tech.

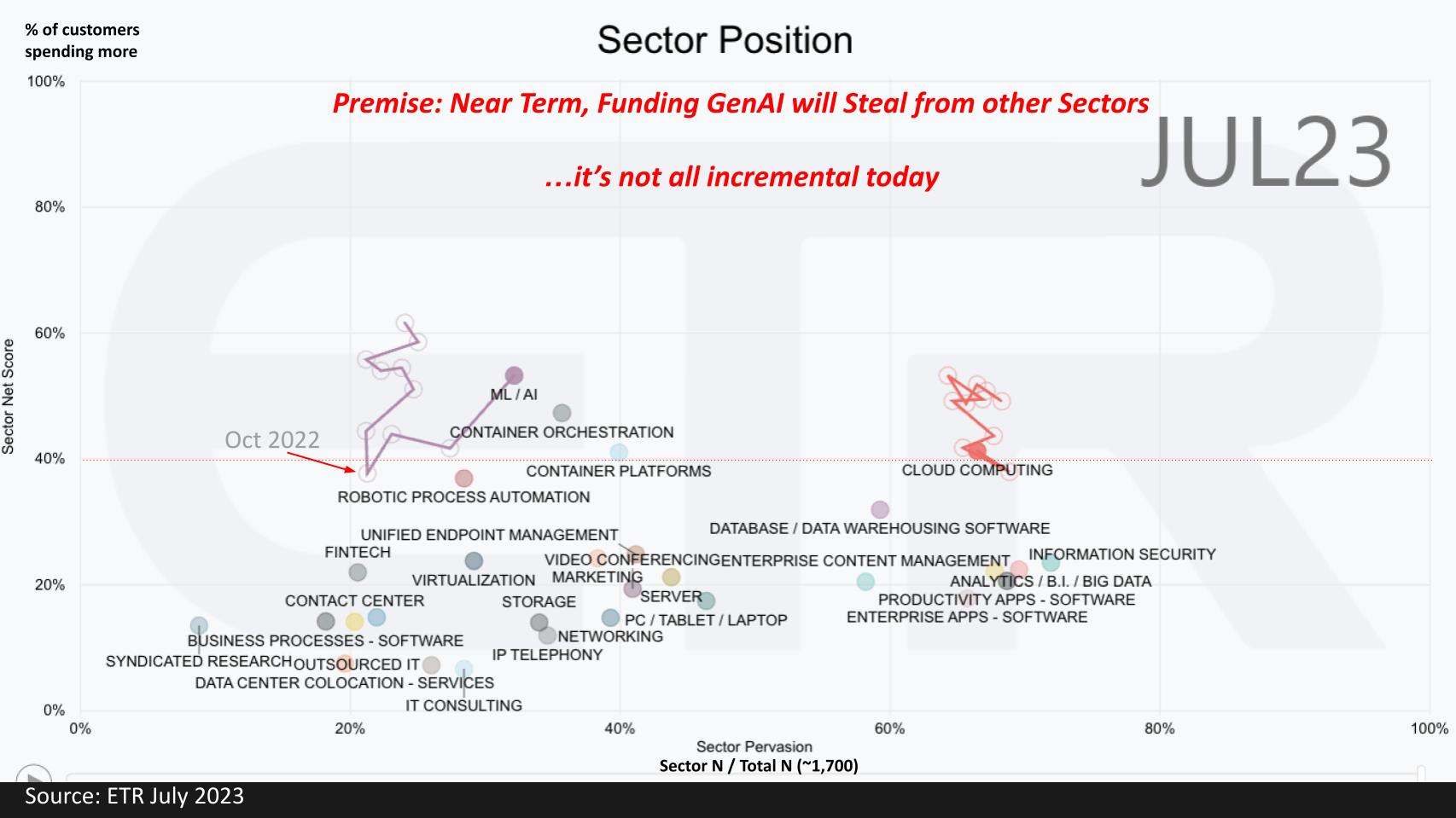

The chart above shows the sectors ETR tracks. Net Score, or spending momentum, is on the vertical axis and pervasiveness in the survey is on the horizontal axis. While all sectors felt the pinch of budget constraints in 2022, AI, which was leading all segments, was suppressed to the point where, by October 2022, it fell below the 40% red dotted line – the high water mark for spending velocity. ChatGPT was introduced to the market in November and since then AI spending has accelerated. However, budgets haven’t changed dramatically.

As a result, we’re seeing compression in other sectors suggesting that in the near term, funding for GenAI will be somewhat dilutive to other segments of the market.

Spending on AI is Outpacing Other Initiatives

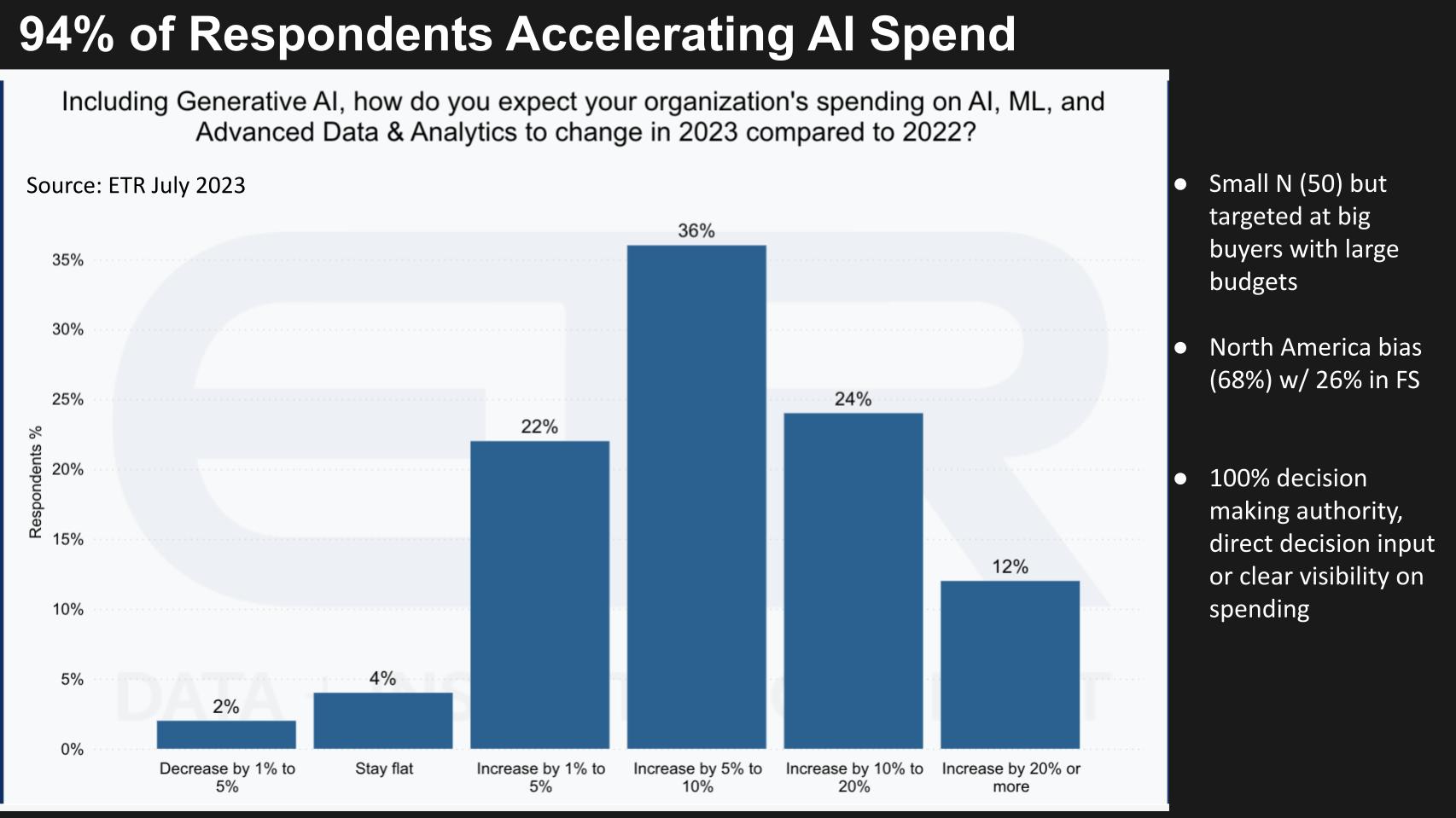

As we mentioned at the top, the data below shows that, among customers spending actively on generative AI, a huge majority, 94%, report accelerating their AI spend in 2023.

While most customers are reporting a modest spend increase of 10% or less, 36% say their spending will increase by double digits.

Mandate from the C-Suite Conflicts with Risk Appetites

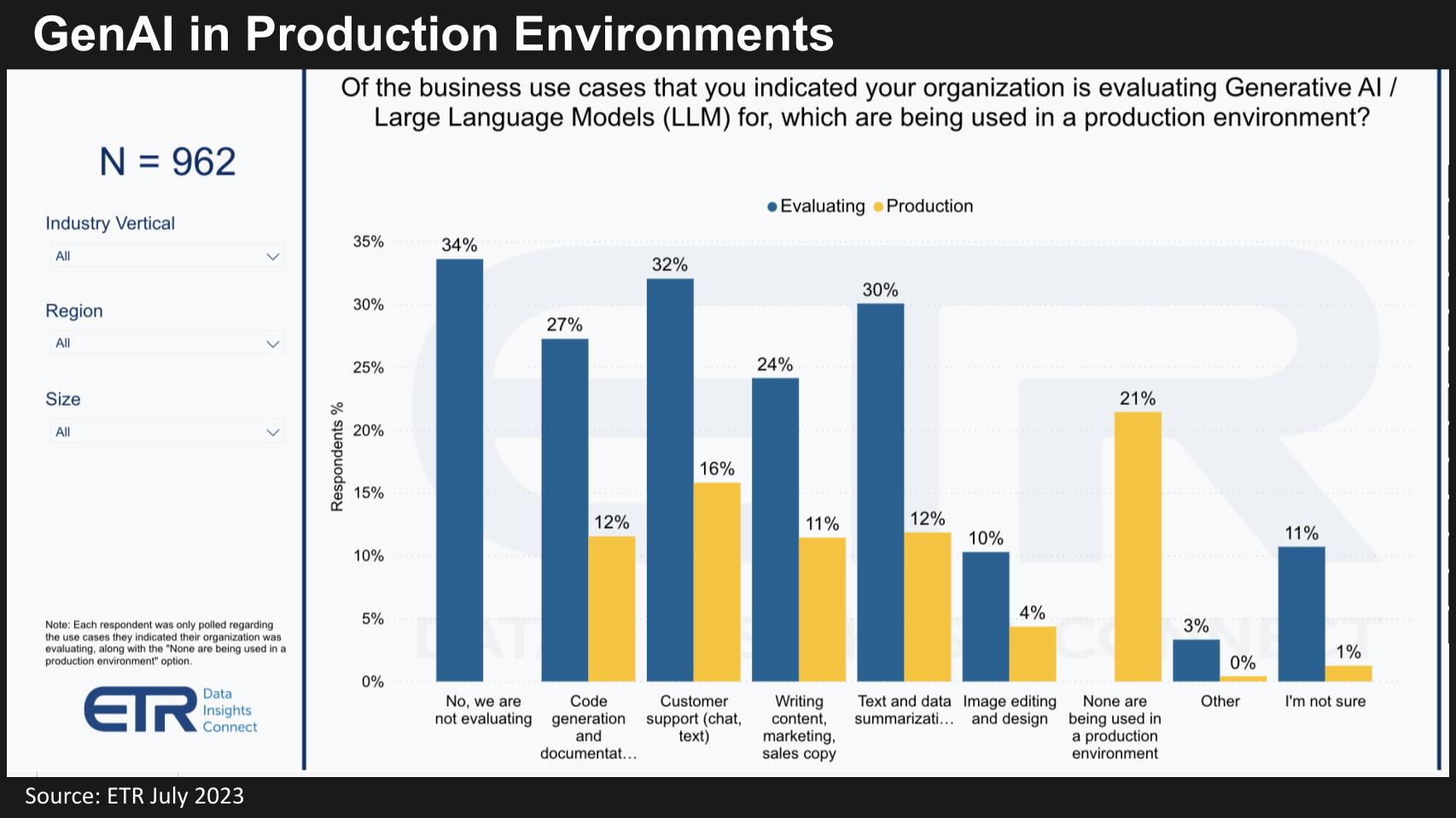

The top-down pressure from the corner office to “figure out” generative AI is urgent. But the actual doing is much more challenging. The chart below shows what customers are doing with GenAI in production environments. While 34% say they’re not evaluating, that number is way down from last quarter. And while you may think that 34% is very high, we believe there is a difference in the minds of respondents between playing with GenAI and “actively evaluating.”

Regardless, when you look at what’s actually happening in production environments, two things stand out: 1) Most people are still in eval mode and 2) The use cases are pretty straightforward with chatbots at the top of the list followed by code generation, summarizing text and writing marketing copy as the main areas of interest today.

We believe it’s critical for organizations to truly understand the business case and identify ROI. The big ROI driver is going to come down to minimizing labor costs. You can put this in the productivity bucket but at the end of the day it’s going to be about lessening the need for humans. This doesn’t necessarily mean unemployment will rise – it simply means that the #1 driver of value is going to be reducing headcount requirements. And that will most certainly change the skills required for employment.

Organizations Must Evaluate the Risks of GenAI

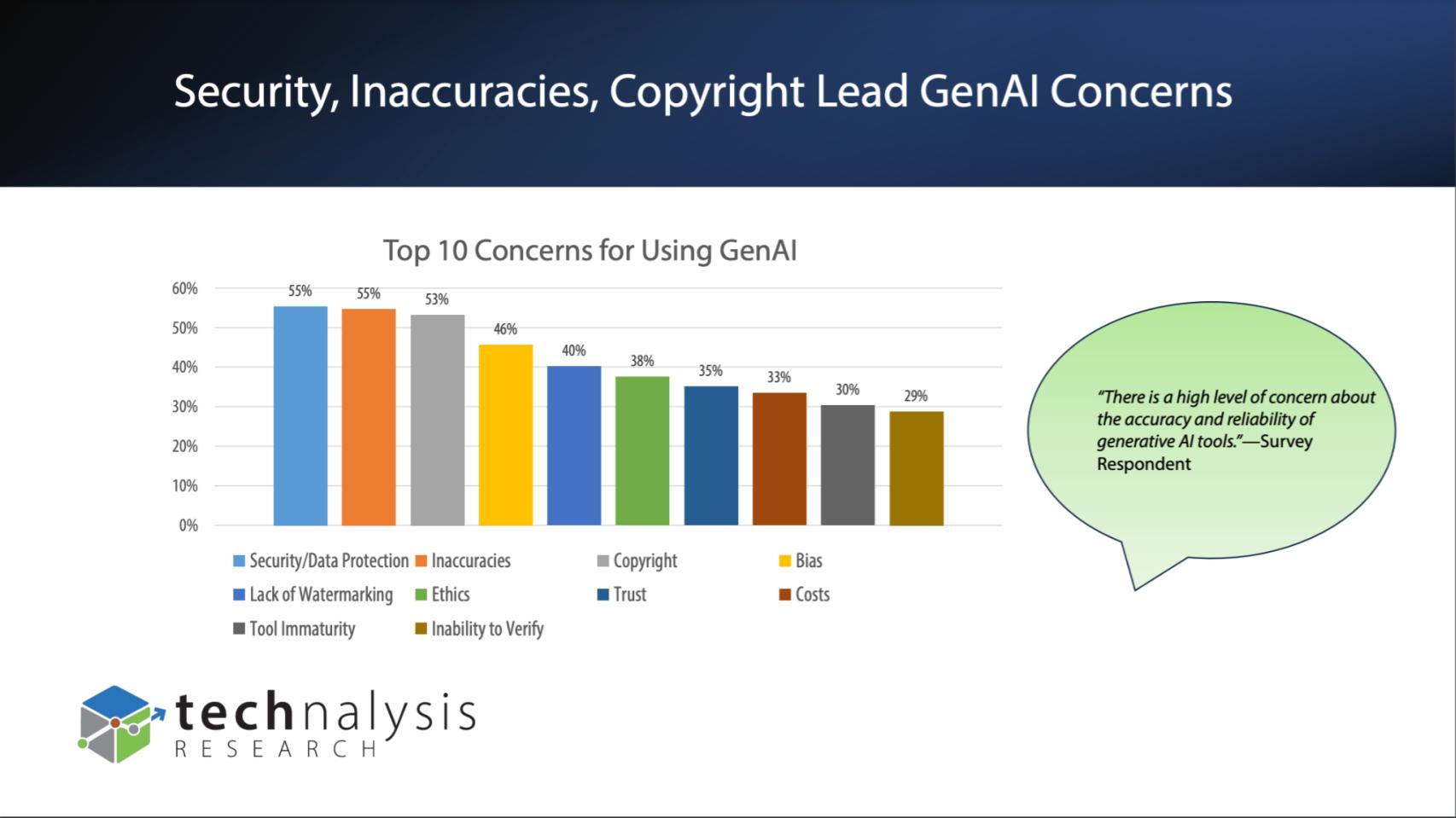

A key challenge facing organizations is that, while top-down momentum is real, deployment opens a can of risk worms. The slide below is from a recently released study by Technalysis, an independent analyst firm run by analyst Bob O’Donnell. It shares results from 1,000 IT decision makers on their top concerns around GenAI, including compliance, IP leakage, legal concerns such as copyright infringement and bias, and data and tools quality.

These are legitimate reasons for being careful with generative AI and how it’s used.

Re-Thinking the Cloud vs. On-Prem Balance

Many of the concerns regarding GenAI risk are leading organizations to say they’re going to do GenAI on-prem.

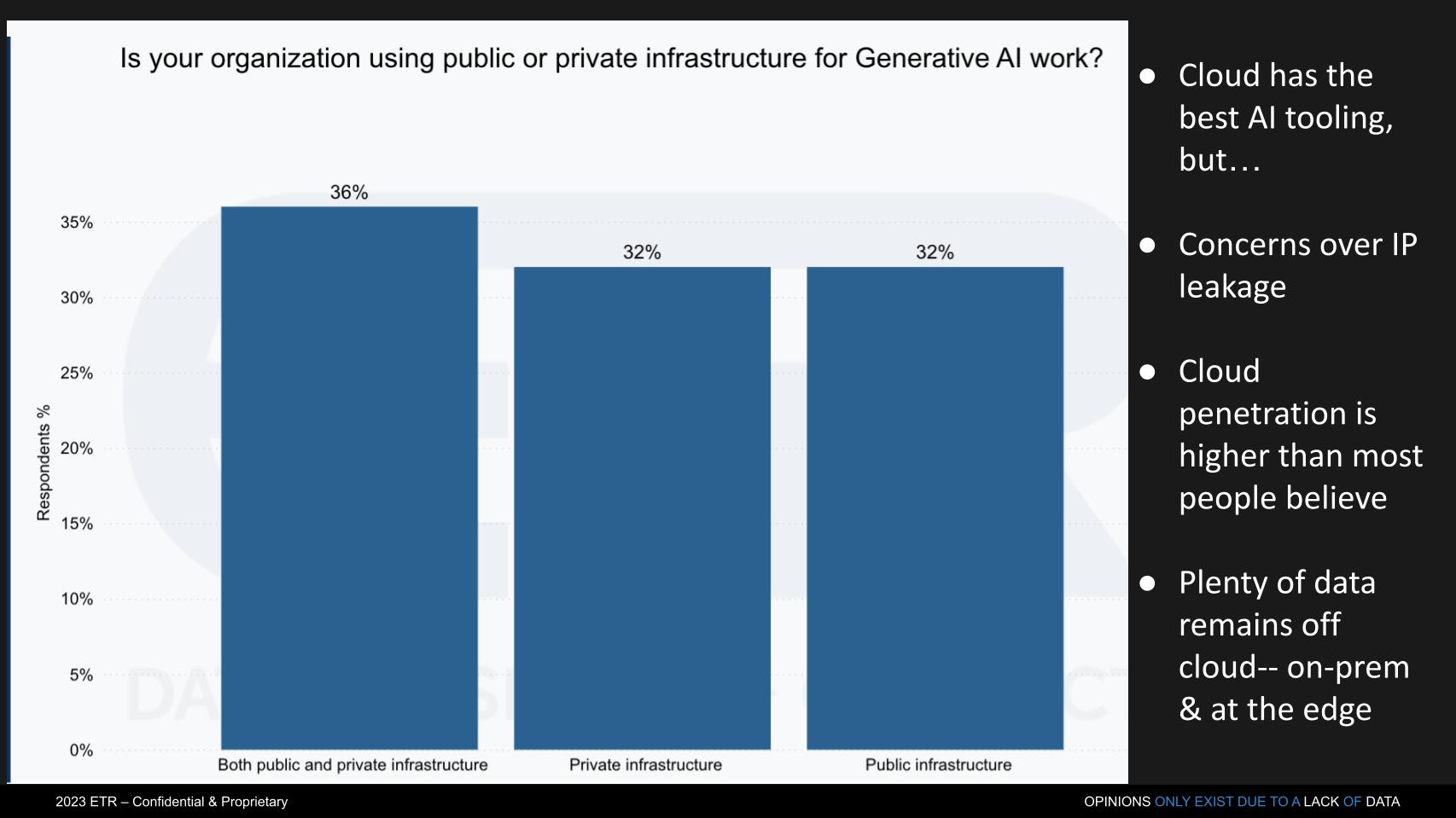

Below is some data from ETR that shows organizations report an identical mix of private and public infrastructure – i.e. public cloud or on-prem / edge deployments. The allure of the cloud is it has the best tooling. But for the reasons mentioned in the Technalysis survey, private infrastructure is expected to be a popular deployment option.

But the cloud continues to have advantages. There’s now lots of data in the cloud – we think 40-45% of workloads are running in the cloud today – perhaps as high as 50% by next year. As we’ve reported in previous research, the cloud and on-prem are coming more into balance – cloud is still growing much faster – but the business case for cloud migration is not as robust for many legacy applications. We believe much of the cloud growth is new apps or features on top of existing cloud workloads.

On-premises workloads are ripe for AI injection and incumbents like Cisco, IBM, Dell Technologies and HPE are eyeing opportunities and aggressively investing.

Cloud Still has a Massive Advantage

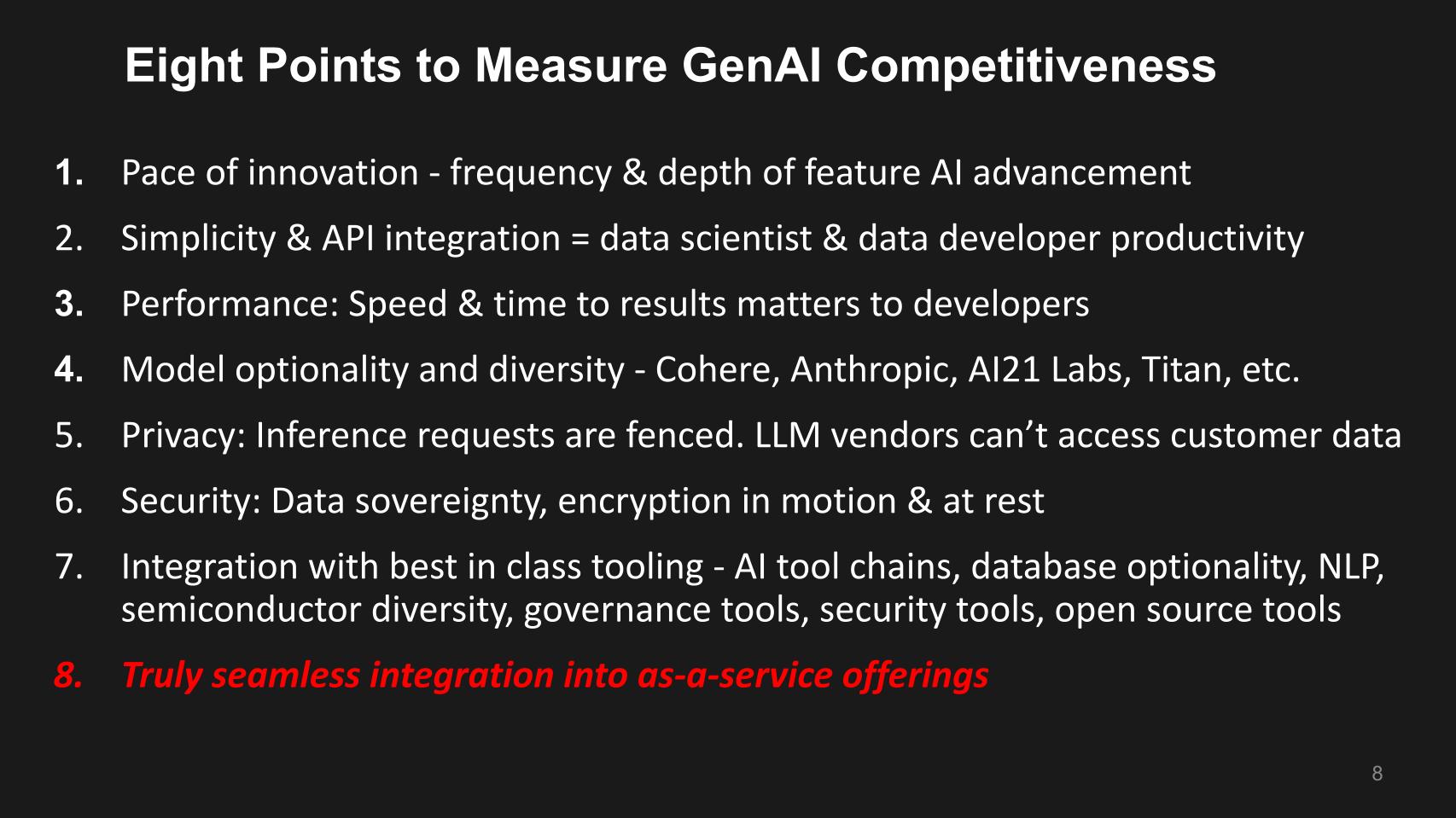

The fact is, in speaking with developers, the cloud is exceedingly capable when it comes to AI. Below are eight points we’ve highlighted that devs tell us the public cloud is delivering on. We believe these points can serve as guideposts for customers when considering the tradeoff in functionality between cloud and on-premises GenAI offerings.

Devs point to the pace of innovation in AI, building on previous tooling like Amazon SageMaker, and to the simplicity of integration and the productivity it’s driving, which allows developers to get to an outcome very quickly. We’ve encouraged our community to check out thecubeai.com as an example and sign up for our private beta. Our team built this very quickly, in a matter of weeks, using tools readily available on AWS, including open source LLMs, MongoDB, Milvus as our vector database and other cloud tools.

It’s now taking more time to train the model based on the queries we’re getting but the time to MVP was one tenth of a normal software product development cycle.

Our experience underscores #4 above: model optionality and diversity are important, not only from the cloud vendor but also from third parties.

The points in #5 and #6 are also critical – the ability to fence off inference requests such that the LLM vendor can’t access any customer data. The richness of security offerings is a key factor as well, including capabilities like ensuring data stays in region and encryption for data in flight.

The cloud offers tools that are first rate from silicon all the way through AI tool chains, maximum database optionality, governance choices, identity access, availability of open source tools and a rich ecosystem of partners.

So one has to ask the likes of HPE and Dell, with GreenLake and Apex respectively: even though they’re talking about offering LLMs (and in the case of GreenLake, they’ve announced LLMs as a service), how capable are these offerings and how truly integrated are they into a seamless as-a-service experience?

That being said, the advantages the traditional on-premises firms have are their relationships with customers, strong service organizations and physics. The speed of light and latency will dictate many of the deployment choices. This is something to watch closely. While doing work on-prem can reduce risk and make a lot of sense, much work needs to be done for incumbent firms to build out offerings and a full stack of ecosystem partners.

Comparing Customer Spending Momentum of Cloud vs. Incumbents

The cloud players have stronger business momentum than incumbent enterprise infrastructure players. Despite all the talk of cloud optimization, repatriation and slowing growth, the numbers still dramatically favor the cloud players.

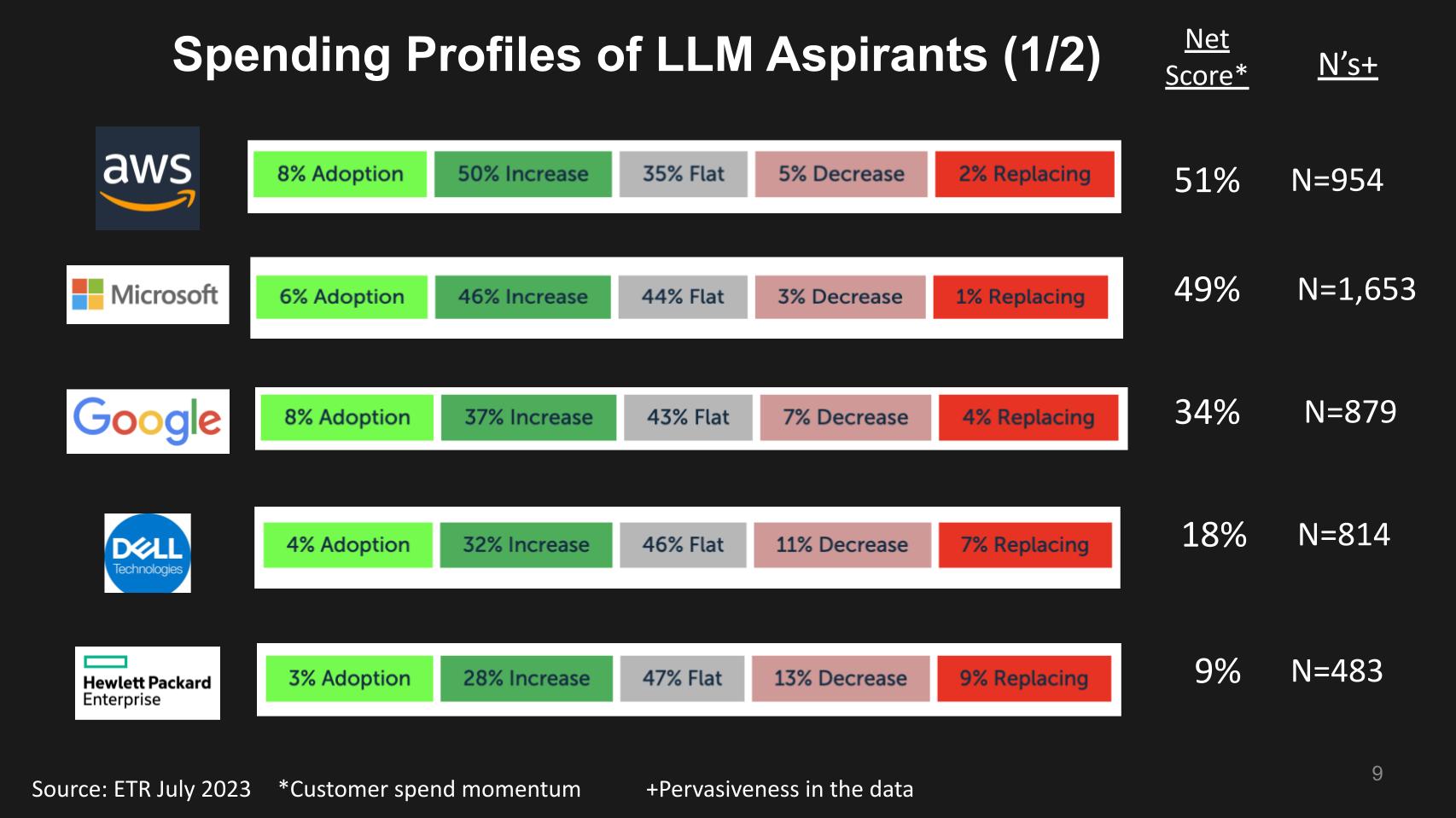

Above is ETR data showing the Net Score breakdown for several aspiring LLM leaders. Net Score is a measure of spending velocity. It tracks the percent of customers that are new logos – that’s the lime green. The forest green represents customers spending 6% or more relative to last year. The gray is flat spending, the pink is spending 6% less or worse and the bright red is churn. Subtract the red from the green and you get Net Score as shown in the column to the right of the bars.
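To make the Net Score arithmetic described above concrete, here is a minimal sketch. It assumes that “the green” means lime green plus forest green and that “the red” means pink plus bright red. The class name, field names and the sample percentages are hypothetical and are not taken from the ETR data set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpendingBreakdown:
    """Percent of respondents in each bucket (hypothetical field names)."""
    new_logo: float        # lime green: new customers
    spending_more: float   # forest green: spending 6% or more vs. last year
    flat: float            # gray: flat spending
    spending_less: float   # pink: spending 6% less or worse
    churn: float           # bright red: leaving the platform

    def net_score(self) -> float:
        # Assumption: green = new logos + spending more,
        # red = spending less + churn. Flat spending does not count.
        green = self.new_logo + self.spending_more
        red = self.spending_less + self.churn
        return green - red


# Illustrative numbers only, not real survey results.
example = SpendingBreakdown(
    new_logo=10.0, spending_more=45.0, flat=35.0, spending_less=7.0, churn=3.0
)
print(example.net_score())  # 45.0
```

Note that flat spending drops out of the calculation entirely, which is why a vendor with a large, stable installed base can still show a modest Net Score.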

To the right of Net Score we show the number of responses in the survey which is a proxy for market presence. So as you can see, AWS, Microsoft and Google have Net Scores of 51%, 49% and 34% respectively and N’s near or over 1,000.

Compare this to Dell and HPE with Net Scores of 18% and 9% respectively. Dell has a large market presence with an N over 800 and HPE a respectable 483. But the cloud still has meaningfully higher momentum from a spending standpoint.

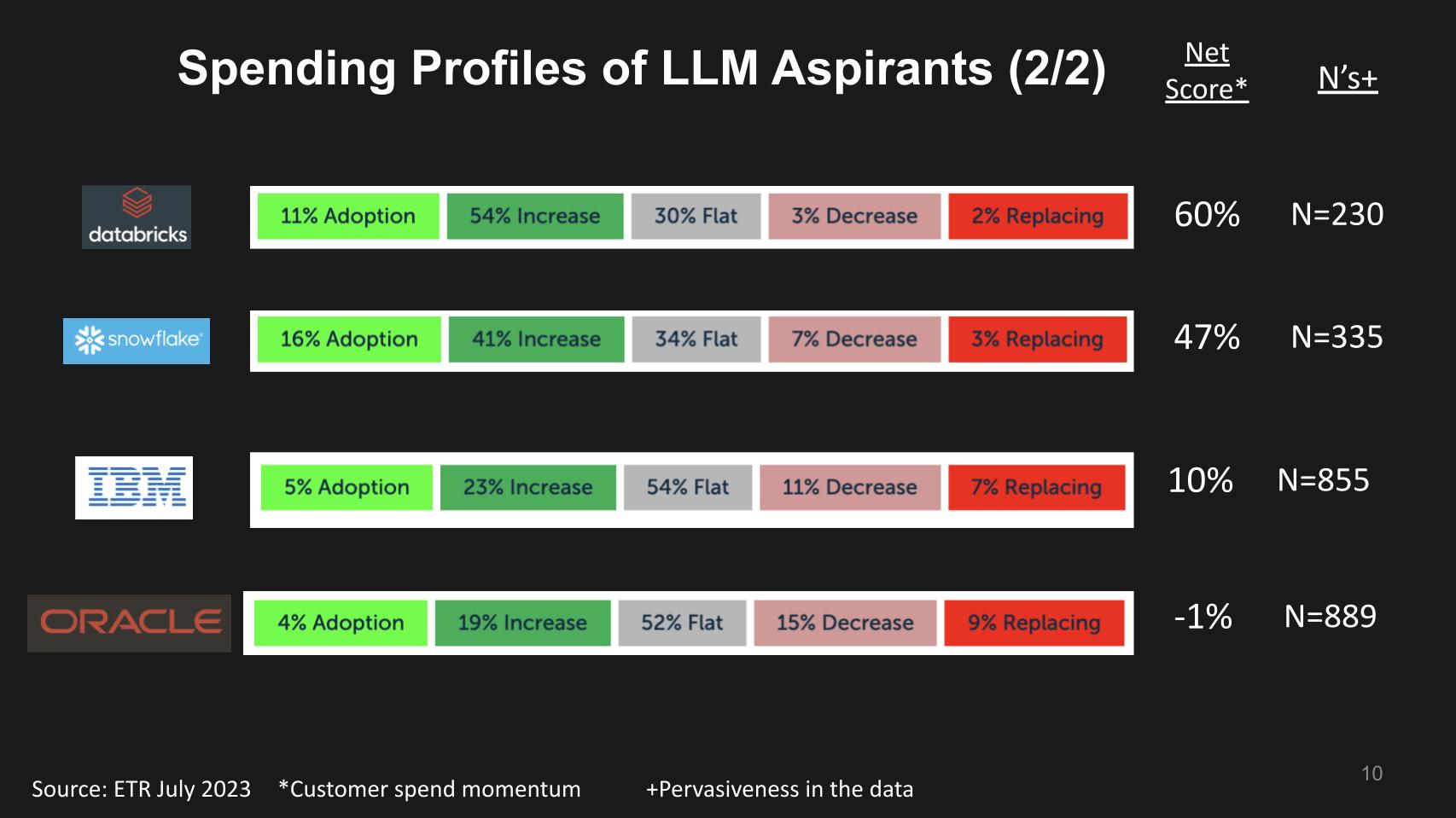

Tracking Some Key Data Players

Below we show the same data for Databricks, Snowflake, IBM and Oracle – some of the key data platform names. Databricks, with a very solid Net Score of 60%, has taken over the top spot from Snowflake at 47%, although Snowflake has a bigger market presence. But clearly Databricks is converging on the traditional domain of Snowflake. IBM and Oracle, as you see, have lower Net Scores of 10% and -1% respectively, both with large N’s in the data set.

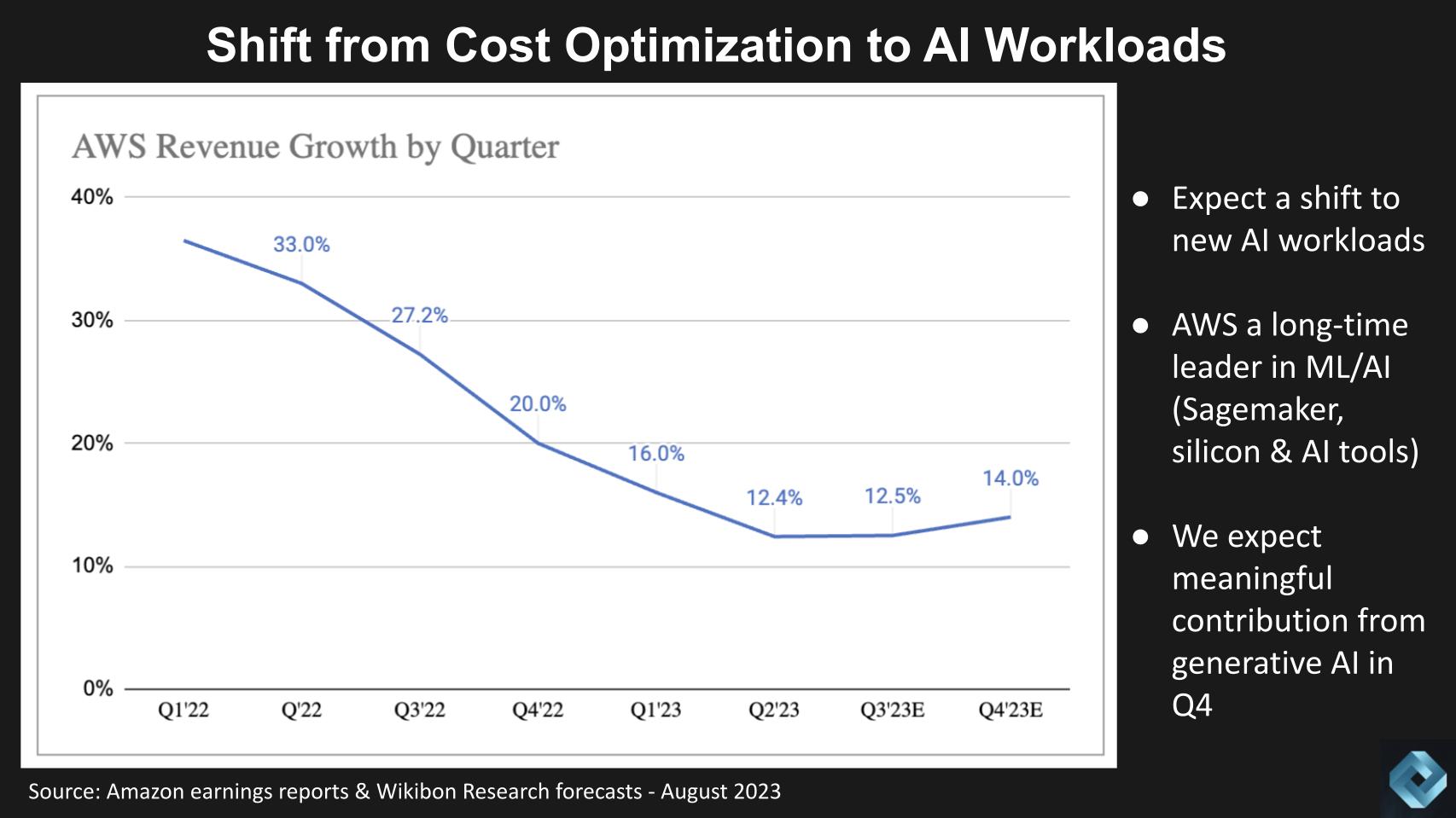

When will GenAI Show up in the Income Statement?

We expect spending on AI generally and GenAI specifically will begin to have a visible impact in the second half of 2023.

Using AWS as a proxy – the chart below shows AWS’ revenue growth rates going back to Q1 2022. We think the deceleration will stabilize in Q3 and our current forecast calls for a re-acceleration of growth in Q4 due to AI as a tailwind and Q4 seasonality. In particular we see GenAI driving more compute and storage as well as ancillary spend in data platforms and associated tooling.

There are risks to this scenario, including the macro environment, the law of large numbers kicking in and competition, but our current thinking is that we’re at the tail end of cloud optimization and shifting to new workload enablement.

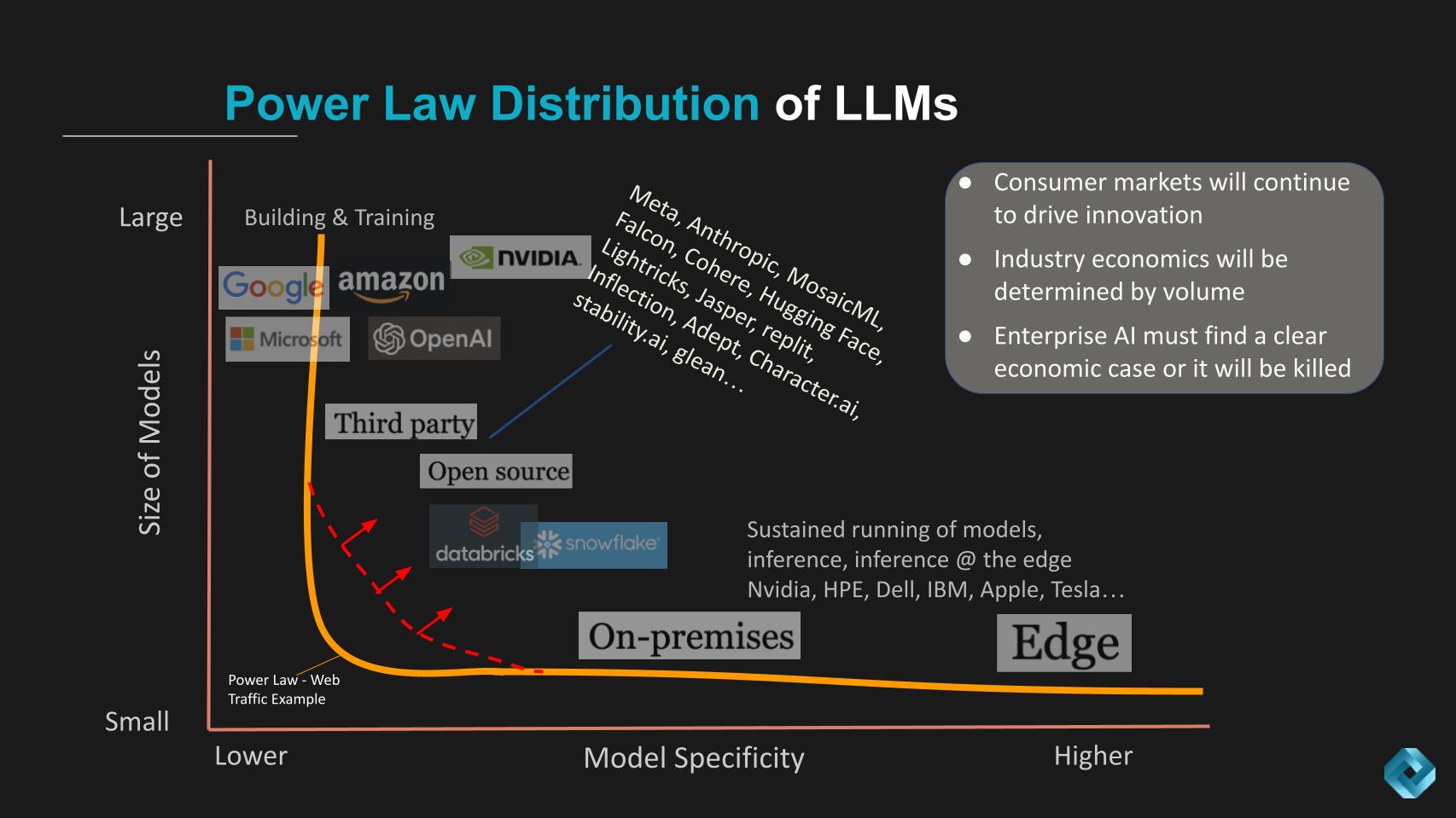

The Power Distribution of GenAI

John Furrier often talks on theCUBE about power laws. We’re going to close by looking at how we see a modified power law distribution of large language models.

A power law distribution is a statistical relationship between two quantities. The simple way to think of a power law distribution is the 80/20 rule. For example, 80% of our sales come from 20% of the products in our portfolio. On the chart below we’re taking liberties with the concept and saying few companies will build the largest language models. Most LLMs will live in the long tail on the X axis; they will be very specific to industry and smaller in size.
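For readers who want to see the 80/20 intuition in numbers, the sketch below generates rank-ordered weights that follow a simple power law (weight proportional to rank raised to a negative exponent) and measures how much of the total the top 20% of items hold. The function names and the choices of `n` and `alpha` are illustrative assumptions, not parameters from our chart or from any data set.

```python
def power_law_weights(n: int, alpha: float) -> list[float]:
    """Weights for ranks 1..n where weight is proportional to rank ** -alpha."""
    return [rank ** -alpha for rank in range(1, n + 1)]


def top_share(weights: list[float], fraction: float) -> float:
    """Share of the total held by the largest `fraction` of items."""
    ordered = sorted(weights, reverse=True)
    # Always count at least one item so tiny lists still work.
    head_count = max(1, round(len(ordered) * fraction))
    return sum(ordered[:head_count]) / sum(ordered)


# Hypothetical portfolio of 100 items with exponent 1.0 (an assumption).
weights = power_law_weights(n=100, alpha=1.0)
print(f"Top 20% of items hold {top_share(weights, 0.2):.0%} of the total")

# A steeper exponent concentrates even more of the total in the head.
steeper = power_law_weights(n=100, alpha=2.0)
print(f"With alpha=2.0 the top 20% hold {top_share(steeper, 0.2):.0%}")
```

Raising `alpha` steepens the curve and concentrates the total in a few items. Lowering it fattens the tail, the direction we’d expect if many smaller, industry-specific models matter.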

Moreover, edge deployments will be plentiful, highly sensitive to latency, economics and power consumption.

Several points we’d like to make here:

First we believe that enterprise tech innovation continues to be driven by consumer volumes. PC chips, data prowess from search and social media, flash storage and more recently, gaming with Nvidia…all found their way into the enterprise via the consumer route.

We believe the big cloud and consumer brands will dominate the largest model space and the sustained running of models, whereas inference will happen on-prem and at the edge.

What’s different in the chart above from, for example, the Web, where the power law curve is like a wall straight down with no torso (the orange line following the Y axis), is that we believe the LLM curve will be pulled up and to the right, as shown by the red dotted line. In this area, we believe open source and 3rd party tools will fill the gap, along with cloud partners like Snowflake and Databricks.

The on-prem incumbents like Dell, HPE and IBM will succeed to the extent that they’re able to leverage LLM diversity and deploy it in their go-to-market models in a manner that is as simple as the cloud but more controlled and cost effective for their specific use cases. Importantly, we believe that enterprise AI will demand clear ROI and economic value or projects will die on the vine.

As we said earlier, we believe that primary value will come from headcount reductions.

Meanwhile, we believe that inference at the edge will be dominated by architectures built on low cost, low power, high performance systems, very often Arm-based designs that have massive volume. Think Tesla and Apple. We believe that the economics at the edge will eventually find their way into the enterprise and be a disruptive force.

It may take the better part of the decade but the economics of enterprise IT, since the PC disrupted the mainframe, have been driven by consumer volumes and we think this wave will be no different. AI + data + volume economics will determine the fundamental structure of the industry in the coming years.

That’s a bet we think is worth making in whatever industry you’re in. Applying it, however, will require careful thought…not AI washing.

Keep in Touch

Many thanks to Alex Myerson and Ken Shifman on production, podcasts and media workflows for Breaking Analysis. Special thanks to Kristen Martin and Cheryl Knight who help us keep our community informed and get the word out. And to Rob Hof, our EiC at SiliconANGLE.

Remember we publish each week on Wikibon and SiliconANGLE. These episodes are all available as podcasts wherever you listen.

Email david.vellante@siliconangle.com | DM @dvellante on Twitter | Comment on our LinkedIn posts.

Also, check out this ETR Tutorial we created, which explains the spending methodology in more detail.

Note: ETR is a separate company from Wikibon and SiliconANGLE. If you would like to cite or republish any of the company’s data, or inquire about its services, please contact ETR at legal@etr.ai.

All statements made regarding companies or securities are strictly beliefs, points of view and opinions held by SiliconANGLE Media, Enterprise Technology Research, other guests on theCUBE and guest writers. Such statements are not recommendations by these individuals to buy, sell or hold any security. The content presented does not constitute investment advice and should not be used as the basis for any investment decision. You and only you are responsible for your investment decisions.

Disclosure: Many of the companies cited in Breaking Analysis are sponsors of theCUBE and/or clients of Wikibon. None of these firms or other companies have any editorial control over or advanced viewing of what’s published in Breaking Analysis.